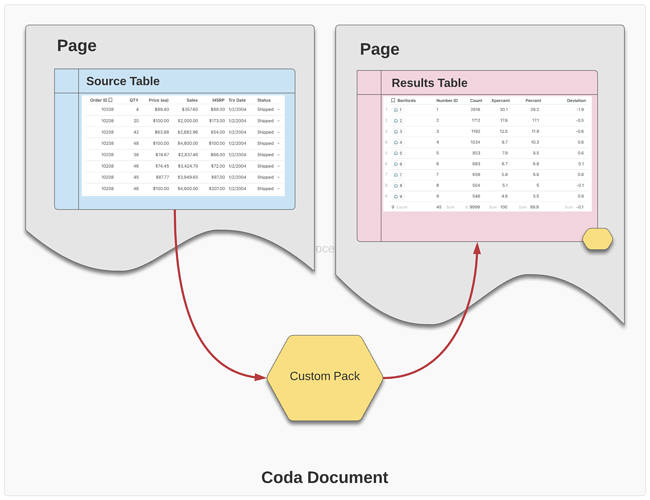

As I continue to learn more about building custom packs, a specific pattern has emerged a few times. The custom pack is designed to read a table, perform various analytics and other processing, and then write a completely new results table.

This is similar to creating a new view of the data, except that the results schema is usually very different from the schema of the source data, depending on the objectives of the view. A good example is a Benford’s Law analysis to detect whether any of the data is fabricated.

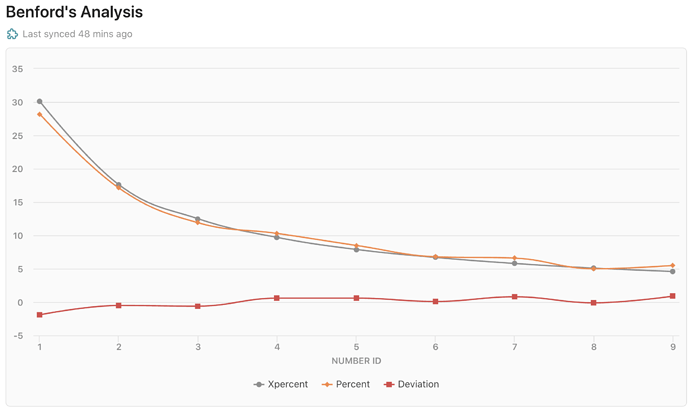

A custom pack to do this must read a given column (or a collection of columns) and produce a chart showing how the actual data compares with Benford’s predicted model of the data. The Xpercent (gray) curve is Benford’s model: the predicted percentage of numbers beginning with each digit, 1 through 9. The Percent (orange) curve is the actual data. In a data set that has not been “cooked”, the two curves should track each other closely. Large deviations (red curve) indicate the numbers in the analyzed column are probably fabricated, i.e., not naturally occurring.
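
For reference, the predicted percentages follow a closed-form rule: Benford’s Law puts the share of values with leading digit d at log10(1 + 1/d). The sketch below is only an illustration (the function name `benfordExpectedPercent` is mine and is not part of the pack); it reproduces the Xpercent curve rounded to one decimal place, which gives the same values the pack hard-codes in its `aXPercent` array.

```typescript
// Expected share (in percent) of values whose leading digit is `digit`,
// according to Benford's Law: P(d) = log10(1 + 1/d).
// The function name is illustrative; it does not come from the pack.
function benfordExpectedPercent(digit: number): number {
  if (!Number.isInteger(digit) || digit < 1 || digit > 9) {
    throw new RangeError("digit must be an integer from 1 to 9");
  }
  const share = Math.log10(1 + 1 / digit);
  // Round to one decimal place, matching the precision used in the chart.
  return Math.round(share * 1000) / 10;
}

// Build the full predicted curve for digits 1 through 9.
const expectedCurve: number[] = [];
for (let d = 1; d <= 9; d++) {
  expectedCurve.push(benfordExpectedPercent(d));
}
console.log(expectedCurve);
// [30.1, 17.6, 12.5, 9.7, 7.9, 6.7, 5.8, 5.1, 4.6]
```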

The beauty of baking this into a pack is the effortless use of Benford’s law to detect fraud in data. In just a few seconds, you can install and configure this pack.

The Pack Pattern

The pattern used for this is pretty straightforward once you’ve created it a half-dozen times. Soon I’ll publish a simplified version of the pattern that could be easily applied to other objectives.

The pack requires a single parameter: an array of the number values to be analyzed, in this case a column of insurance policy values. This could be any column of numbers. The pack could also support number values stored as strings, but that would require a slight modification to the pack itself.

    //
    // define the parameters
    //
    const numberArray = coda.makeParameter({
      type: coda.ParameterType.NumberArray,
      name: 'Numbers',
      description: 'the numbers you want to calculate',
    });
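
The string-valued case mentioned before the parameter definition could be handled by normalizing each value before counting. Here is a minimal sketch, assuming values may arrive either as numbers or as formatted strings such as "$1,234.50". The helper name `leadingDigit` is hypothetical and the pack as shown does not include it. Note that it takes the first non-zero digit, so 0.052 counts as a leading 5, whereas the pack's own code skips values whose text starts with 0.

```typescript
// Hypothetical helper (not part of the pack): returns the first
// significant digit of a value, or null when there is none.
function leadingDigit(value: number | string): number | null {
  // Numbers are converted to their decimal text form; strings are used as-is.
  const text = typeof value === "number" ? value.toString() : value;
  // The first character in the range 1-9 is the leading significant digit.
  // Signs, currency symbols, separators, and leading zeros are skipped.
  const match = text.match(/[1-9]/);
  return match ? Number(match[0]) : null;
}

console.log(leadingDigit(48250));       // 4
console.log(leadingDigit("$1,234.50")); // 1
console.log(leadingDigit("0.052"));     // 5
console.log(leadingDigit("n/a"));       // null
```

One caveat of this approach: any digit in the text counts, so a label like "Q3 total" would be read as a leading 3. Stricter validation would be needed for columns that mix labels and numbers.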

The schema that this pack exports is very simple.

    //
    // Benford's schema
    //
    const BenfordsSchema = coda.makeObjectSchema({
      type: coda.ValueType.Object,
      id: "numberID",
      primary: "numberID",
      properties: {
        numberID: { type: coda.ValueType.String },
        count: { type: coda.ValueType.Number },
        xpercent: { type: coda.ValueType.Number },
        percent: { type: coda.ValueType.Number },
        deviation: { type: coda.ValueType.Number },
      }
    });

The processing is a little more complex, but this gives you some insight into the Benford model.

    //
    // process the data
    //
    execute: async function ([numberArray], context) {
      //
      // summarize the rows into the numeric counts
      //
      let benfordCounts = {};
      // Benford's predicted percentage for each leading digit (index 0 is unused)
      let aXPercent = [0.0, 30.1, 17.6, 12.5, 9.7, 7.9, 6.7, 5.8, 5.1, 4.6];
      for (var i = 0; i < numberArray.length; i++) {
        // the first character of the number's text form is its leading digit
        var thisDigit = numberArray[i].toString()[0];
        if (thisDigit != "0") {
          if (!benfordCounts[thisDigit]) {
            // first time this digit is seen: create its row
            benfordCounts[thisDigit] = {
              "numberID": thisDigit,
              "count": 0,
              "xpercent": aXPercent[thisDigit],
              "percent": 0.0,
              "deviation": 0.0
            };
          }
          benfordCounts[thisDigit].count += 1;
        }
      }
      //
      // compute the percentages
      //
      for (var j in benfordCounts) {
        // toFixed() returns a string, so convert back to a number to match the schema
        benfordCounts[j].percent = Number(((benfordCounts[j].count / numberArray.length) * 100).toFixed(1));
        benfordCounts[j].deviation = Number((benfordCounts[j].percent - benfordCounts[j].xpercent).toFixed(1));
      }
      //
      // transform the data frame to match the schema
      //
      const aResults: any[] = [];
      for (var j in benfordCounts) {
        aResults.push(benfordCounts[j]);
      }
      console.log(aResults);
      return {
        result: aResults,
        continuation: undefined,
      };
    },
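
Because the counting logic does not depend on Coda, it can be pulled into a plain function and tried outside the pack. The following is a sketch under that assumption, not the pack's actual code: `benfordRows`, `BenfordRow`, `EXPECTED`, and `round1` are names I made up, and the row shape simply mirrors the schema shown earlier. It divides by the total number of input values, as the pack does, and it skips any value whose text form does not start with a digit from 1 to 9 (zeros, negatives, values below 1).

```typescript
// Row shape mirroring the pack's schema (interface name is illustrative).
interface BenfordRow {
  numberID: string;
  count: number;
  xpercent: number;
  percent: number;
  deviation: number;
}

// Benford's expected percentages, indexed by leading digit (index 0 unused).
const EXPECTED = [0.0, 30.1, 17.6, 12.5, 9.7, 7.9, 6.7, 5.8, 5.1, 4.6];

// Round to one decimal place and keep the result a number, not a string.
function round1(value: number): number {
  return Number(value.toFixed(1));
}

// Hypothetical stand-alone version of the pack's processing step.
function benfordRows(numbers: number[]): BenfordRow[] {
  // counts[d] holds how many values start with digit d.
  const counts = [0, 0, 0, 0, 0, 0, 0, 0, 0, 0];
  for (const n of numbers) {
    const digit = Number(n.toString()[0]);
    // "-" and other non-digits become NaN and fail this check.
    if (digit >= 1 && digit <= 9) {
      counts[digit] += 1;
    }
  }
  const rows: BenfordRow[] = [];
  for (let d = 1; d <= 9; d++) {
    if (counts[d] === 0) continue; // only emit digits that were seen
    const percent = round1((counts[d] / numbers.length) * 100);
    rows.push({
      numberID: String(d),
      count: counts[d],
      xpercent: EXPECTED[d],
      percent: percent,
      deviation: round1(percent - EXPECTED[d]),
    });
  }
  return rows;
}

// Made-up sample values, purely for illustration.
const sample = [120, 135, 1800, 240, 2999, 31, 47, 52, 960, 11];
console.log(benfordRows(sample));
```

With this sample, four of the ten values start with 1, so the first row reports a percent of 40 against an expected 30.1, for a deviation of 9.9.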